I’ve been hard on Lisp lately.

Last time I wrote here, I called it a curse — a language so powerful that everyone who touches it ends up building their own private dialect, until no one can quite understand anyone else. A small Tower of Babel, assembled out of parentheses. I still think that’s true.

But I left something out of that post, because it didn’t fit the argument I was making. The same Lisp idea that scatters programmers did the opposite thing to me, once. It didn’t scatter anything. It pulled something very old back together.

It gave me back my ancestors.

Let me explain, because it sounds like more than it is.

I’m not a Lisp programmer by trade. I just love the elegance of the thing — I’ve lived inside Emacs for years, written my own Elisp, poked at Clojure enough to feel what makes the family different. And the difference, the one everyone eventually trips over, is this: in Lisp, code and data are the same kind of thing. A list of symbols is information, or it’s a program, depending only on how you choose to read it. Data is code, and code is data.
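A minimal sketch of that idea, written in Python rather than Lisp so it can be run almost anywhere. The nested list stands in for a Lisp form. The `OPS` table and the `evaluate` function are hypothetical names made up for this illustration and are not part of any Lisp.

```python
import operator

# A tiny expression held as a plain nested list, Lisp-style: (+ 1 (* 2 3))
expr = ["+", 1, ["*", 2, 3]]

# Hypothetical operator table, for this sketch only.
OPS = {"+": operator.add, "*": operator.mul}

def evaluate(form):
    """Read a nested list as a program: the first item names the operation."""
    if not isinstance(form, list):
        return form  # a bare number evaluates to itself
    op, *args = form
    return OPS[op](*(evaluate(arg) for arg in args))

# Read as data: it is just a list that can be measured and rewritten.
as_data_length = len(expr)

# Read as code: the very same list produces a value, 1 + (2 * 3).
as_code_value = evaluate(expr)

# Because the program is data, another program can edit it before running it.
rewritten = ["*" if item == "+" else item for item in expr]
rewritten_value = evaluate(rewritten)  # now 1 * (2 * 3)
```

Nothing about `expr` changes between the two readings. Only the reader changes, which is the whole point.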

Most people find that strange. Some find it beautiful. I was sitting with the strangeness of it one evening when something turned over in my head.

The vakana, I realized, were the same shape.

Vakana is our Malagasy word for beads — and there is no other word for them in my language, which tells you something about how old they are. I grew up knowing two things about them. That they were beautiful. And that they were a little frightening — in Madagascar, beads are tangled up with sorcery, and you learn young to keep a respectful distance.

What nobody told me — what I had to go the long way around to see — is that they were also a tool for thinking.

A bead has a form, a surface, a feeling it carries, a mark. Thread a few together and you have a sentence. Wear them in an order and you have a record. Pass them to someone and you have a message. The bead isn’t only worn — it’s read. And it doesn’t only get read; it does: it anchors a memory, marks an intention, names a person, declares a state. A unit that is at once what you say and what you mean. The exact shape a Lisp programmer knows in his fingers.

It was a small thing to notice, structurally. It was a very large thing for what it implied.

For most of my education I’d absorbed, without anyone ever saying it out loud, that the practices of my ancestors were aesthetic. Or spiritual. Or symbolic — and sometimes those words were just a polite way of saying not quite serious. The real thinking tools belonged to modernity: the journal, the calendar, the database.

But the vakana are exactly that. My ancestors used them the way I use a journal — to hold what happened, what to remember, what to commit to. The way I use a calendar — to pin meaning to a time and a place. The way an engineer uses a schema — to compose meaning out of a few fixed parts. They wore the whole system on the body, where it could be touched and counted without looking.

What I had been taught to see as ornament was the operating system.

There’s a second half to this, and it’s the half that turned the realization from clever into important.

Malagasy is a language made of pictures, and you hear it most clearly in the way we keep time. We don’t say one-thirty in the morning; we say mitrena ombilahy, the bellowing of the bull. Two in the morning is maneno sahona, when the frogs call. Six in the evening is maty masoandro, the death of the sun. (I wrote about this once: in Malagasy, time is told by what the world is doing, not by a number.) Even the turning of the year was once read this way. The month names we use today are borrowed from the Arabic zodiac, but the older Malagasy ones were small descriptions of the world: Papangolahy lava elatra, the long-winged male Papango, for the season the zodiac calls Aries.

And it isn’t only time. The names themselves are descriptions. A tree can be called Mpanjakaben’ny tany, the great king of the earth; the pomegranate is Apongaben-danitra, heaven’s great drum. A person’s name can be a whole sentence — Andriantsimitoviaminandriandehibe: the noble without equal among the great nobles. To name a thing, in Malagasy, is already to draw it. To think in the language is to think in pictures.

I knew, before I made the connection, how much that matters — because I’d wandered into the Art of Memory. The Memory Palace: the old trick that lets a person hold a whole shuffled deck, or a thousand names, by placing vivid images in remembered rooms. It works because memory is built for image and place far more than for word and order. The Greeks thought they’d discovered it. They were late too.

The classical memory artists knew one more thing — that the kind of image matters. An image that frightens or shocks or moves you sticks; a neutral one slides off. And here is the part that stopped me: the vakana names are already charged that way. Tsileondoza — roughly, disaster cannot undo it — is a bead worn against ruin. The whole intention compressed into one frightening word, carried on the body.

So the fear that follows the beads in Malagasy life — the sorcery, the thing I was taught to keep away from — was never a superstition wrapped around a harmless object. It was the technology working. An emotionally inert bead is a worse memory tool than a charged one. My ancestors built their system out of images that stick, and one of the surest ways to make an image stick is to make it a little dangerous.

It was a cognitive technology. It was also magic. I’ve stopped thinking those two sentences argue with each other.

Not yet another technique. Something bigger. Something stronger. One of the most sophisticated tools for thought any culture has ever produced, and most of us who inherited it have forgotten that this is what it always was.

Let me not overstate it, though. The art is not dead. The ombiasy — our sages and healers, the keepers of the old knowledge — still hold the vakana, and hold it far more deeply than I do; there are things in it I probably can’t yet fathom. What’s been lost is not the tradition itself but the everyday relation most of us had to it — the way it was once common knowledge and is now a specialist’s, kept by a few while the rest of us learned only to be a little afraid of it.

I’d like that to be known again, by the rest of us. That, honestly, is most of why I’m doing any of this.

Here is what is mine, and what is not.

I didn’t invent the vakana — they’re older than my whole line. I didn’t discover that they form a language, either. That reading came from inside the tradition itself, and it had been set down in serious, scientific study years before I had any interest in note-taking or productivity — before I had even started to learn computer science. It was never mine to claim. And the bead itself was never the genius — the genius was the grammar that could take any object, even a foreign one, and make it carry meaning.

So what’s mine is small, and I want to keep it small. Only this: I happened to be standing in an unlikely spot — Malagasy, in love with Lisp, curious about memory — from which you could see that the bead and the list and the remembered room are the same idea wearing different clothes. That’s the whole of it. I noticed a rhyme. And I’m holding it lightly, the way you should hold any reading that hasn’t yet been proven wrong.

The app came after all of this. Not before.

A few posts ago I told you I was building something, and that I’d write about the journey. This is me, finally writing about it — except I owe you an honest correction. The tool isn’t the one I set out to make.

What I wanted was modest. Last year we planted trees, and I started watching the seasons turn — the jacaranda in flower, the odd little weeds that appear in winter and vanish before you’ve learned their names. I wanted to keep a journal of the plants in my garden: how they grew, what they were called in Malagasy, how my people once used them. Nothing on my phone fit the shape of it, so I started building something that would.

And the moment I did, the vakana insight walked in and asked to be tried. I reached for the bead-grammar almost as an experiment — could it hold a plant, a season, a cycle of growth? It could. And not only that: it held nearly everything else I had ever wanted to write down. I came back holding my ancestors’ beads. The journey took a turn I didn’t choose, and I followed it.

It’s called Vakana.mg, and I want to say plainly what it is and isn’t. It is not the vakana. The system is older than any of us and will outlast the app by a long way. What I’ve built is a working surface for it — a way to practise the grammar every day, on the phone most of us actually live inside, without a string of beads in the pocket. It’s an imperfect translation, because anything digital trying to carry something physical is imperfect. My own memory taught me that years ago, against my preferences: for remembering, the physical always wins.

But even imperfect work bears fruit. The app lets the practice be kept, and re-read, and handed to someone else. And it lets the vakana be met, for the first time, by people who’d otherwise never hear the word.

If I do this honestly, the app won’t be the destination. It’ll be a door. Some who walk through it will be happy with the digital version. Some will want a real bead in the hand. Some will go and study what the ancestors actually did and bring back more than I ever could. All of that is good, and none of it belongs to me.

Lisp and the vakana turn out to be the same shape — a tiny grammar that composes without end — separated only by centuries and by what they’re made of. And neither of them hands you meaning. Lisp gives you a handful of parentheses and lets you build whatever you want; the bead gives you a handful of forms and lets you mean whatever you need. You can no more read the meaning off a stranger’s beads than off a stranger’s code — and I built the app that way on purpose.
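For readers who think in code, that last point can be sketched in a few lines of Python. This is an illustration only. The `Bead` class, the `read` function, the two legends, and every value in them are hypothetical placeholders. None of it comes from the tradition, and none of it is the app's actual code.

```python
from dataclasses import dataclass

@dataclass(frozen=True)  # frozen makes a bead hashable, so it can key a legend
class Bead:
    form: str
    surface: str

def read(strand, legend):
    """Read a strand in order through one person's private legend."""
    return [legend.get(bead, "(unknown)") for bead in strand]

# Placeholder beads: a small, fixed set of forms.
round_bead = Bead(form="round", surface="smooth")
long_bead = Bead(form="long", surface="ridged")

# The grammar is only order and repetition.
strand = [round_bead, long_bead, round_bead]

# The meaning lives with the person who made the strand, not in the beads.
maker_legend = {round_bead: "planted", long_bead: "first rain"}
stranger_legend = {}  # a stranger sees the same forms and has no legend

maker_reading = read(strand, maker_legend)
stranger_reading = read(strand, stranger_legend)
```

The strand is identical in both calls. With the maker's legend it reads as a short record; with an empty legend it reads as three unknowns.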

I’ve written here before that you have to seek your own understanding — that no one can do that part for you, however much you’d like them to. The vakana is the same conviction, made into a tool.

Because no one can hand you your meaning, either: not a guru, not even a true elder — and not me. Not because the keepers are frauds; they hold real depth, more than I ever will. But a meaning you were handed, and did not make yourself, won’t hold — and an image you choose is the only kind that does. What an elder can give you, what I can give you, is the grammar: small, and old, and ours, and far stronger than I was taught. The meaning, you write yourself.

I’m building it for the few people who, reading this, will feel a small click of recognition.

If that’s you, stick around. I’ll be writing about the journey.

It isn’t out yet: vakana.mg just says coming soon, which is honest. If you’d like to know when it’s ready, you can leave your email there.

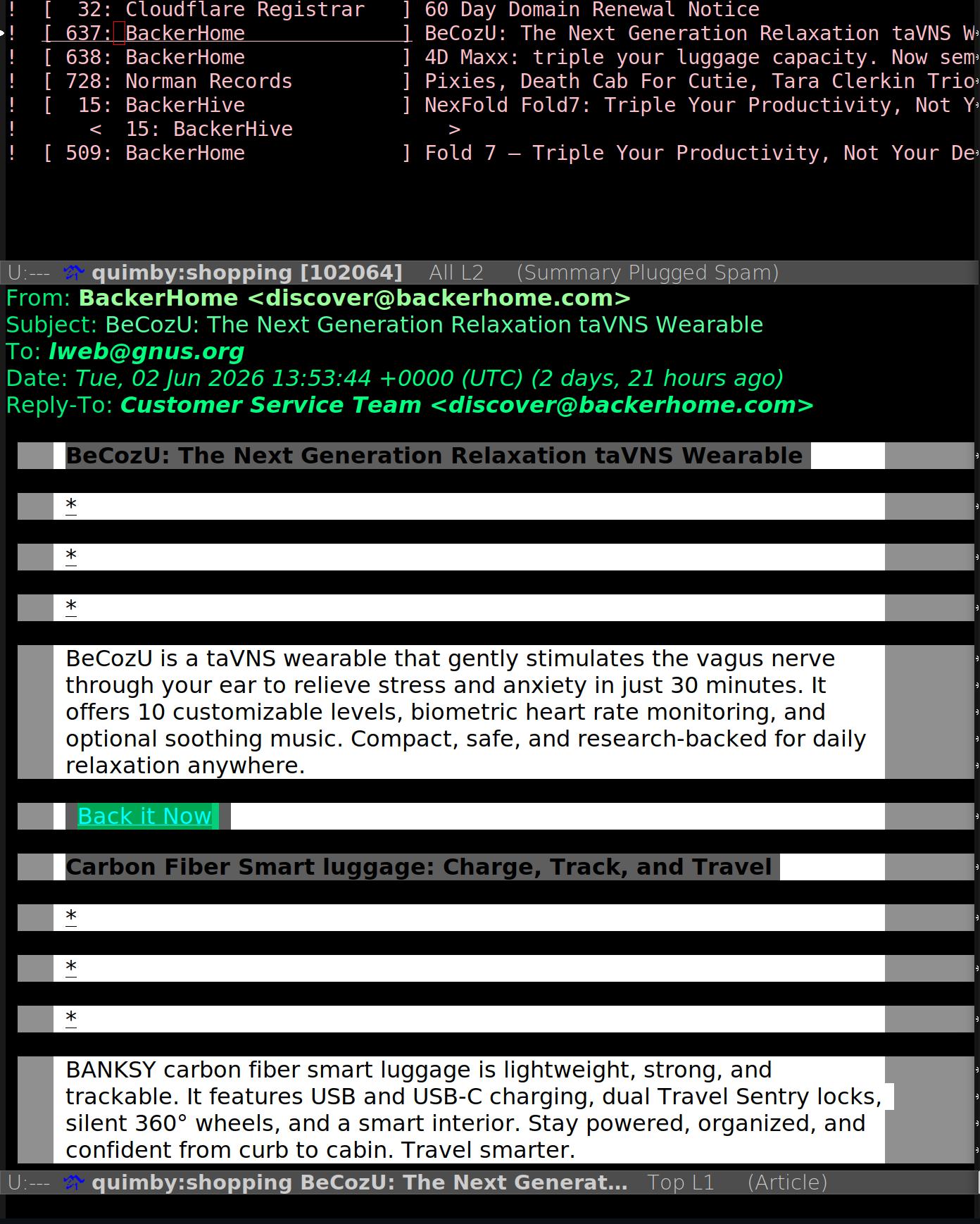

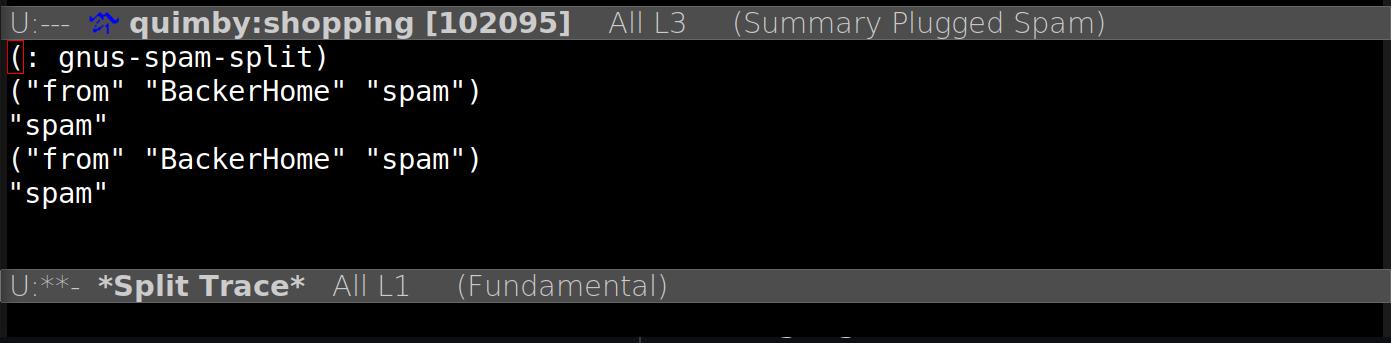
