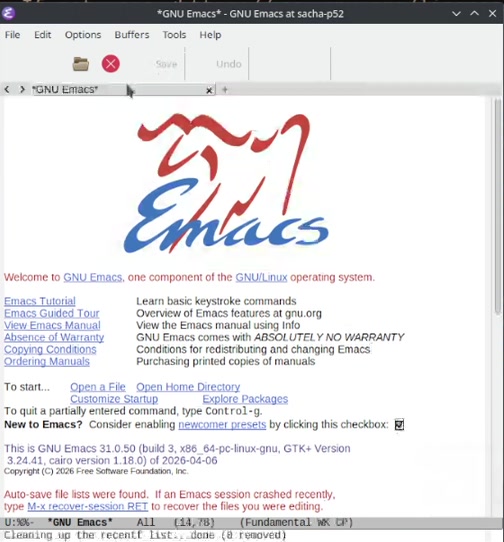

You open your favorite editor, and, out of the box, Emacs writes state files all over the place. It puts bookmarks in ~/.emacs.d/, drops #auto-saves# next to every file you edit, leaves .#lockfiles in your projects, and scribbles recentf, history, saveplace, projects, transient/history.el, tramp, network-security.data, multisession/, url/, image-dired/, erc/, rcirc/... you get the picture. Don't get me wrong, the features are great. The default locations are what annoy me.

Most of those files are not noise. They are the state that makes Emacs feel like Emacs across restarts, so you cannot just delete them. But you also do not want them spread across N different directories with cryptic names. (I know I don't.)

I want one directory I control, every cache-bound variable pointing inside it, and a switch to flip it between "persistent inside my config" and "ephemeral in /tmp". No external package, no naming scheme to memorize, just a small pattern I can read top to bottom in init.el.

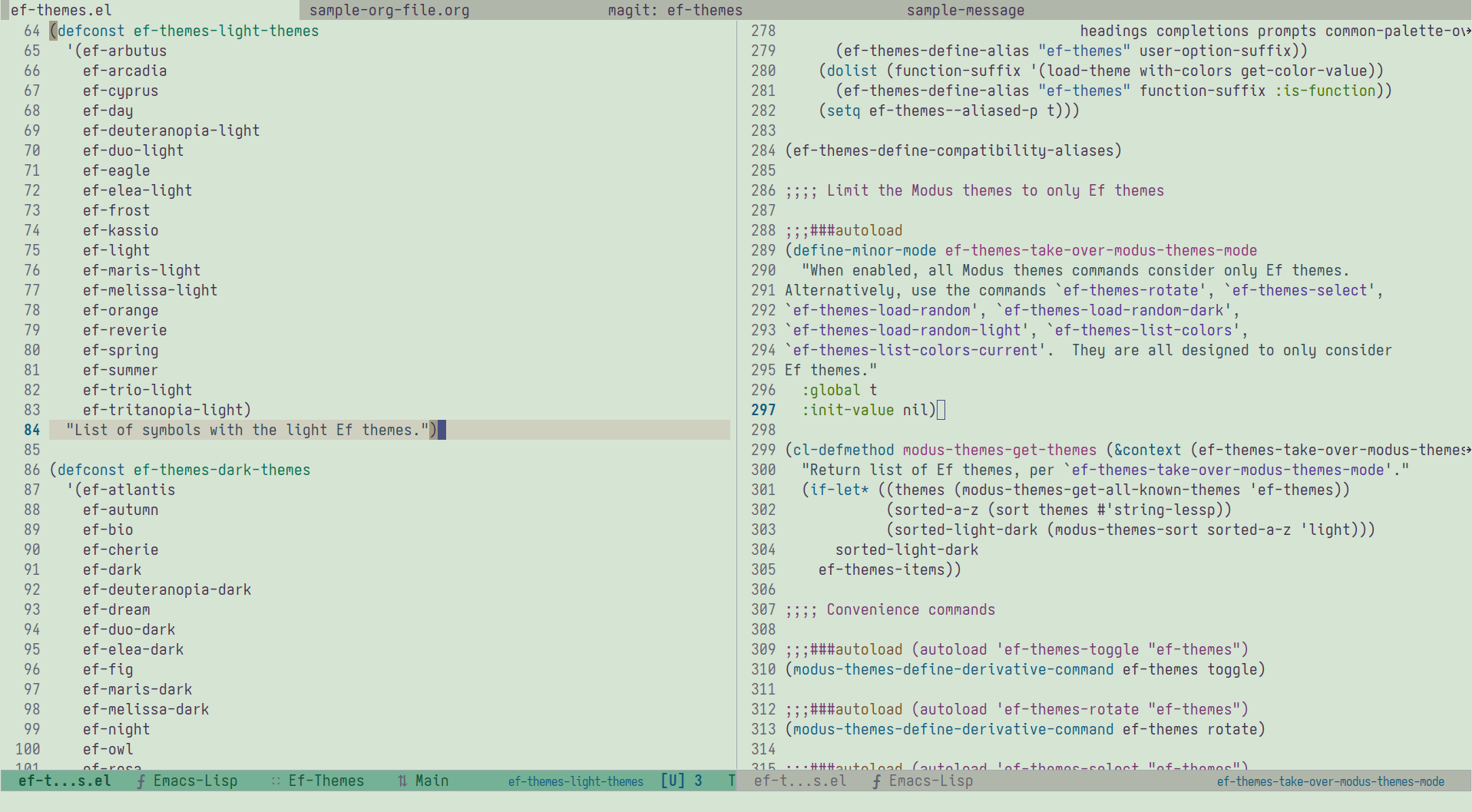

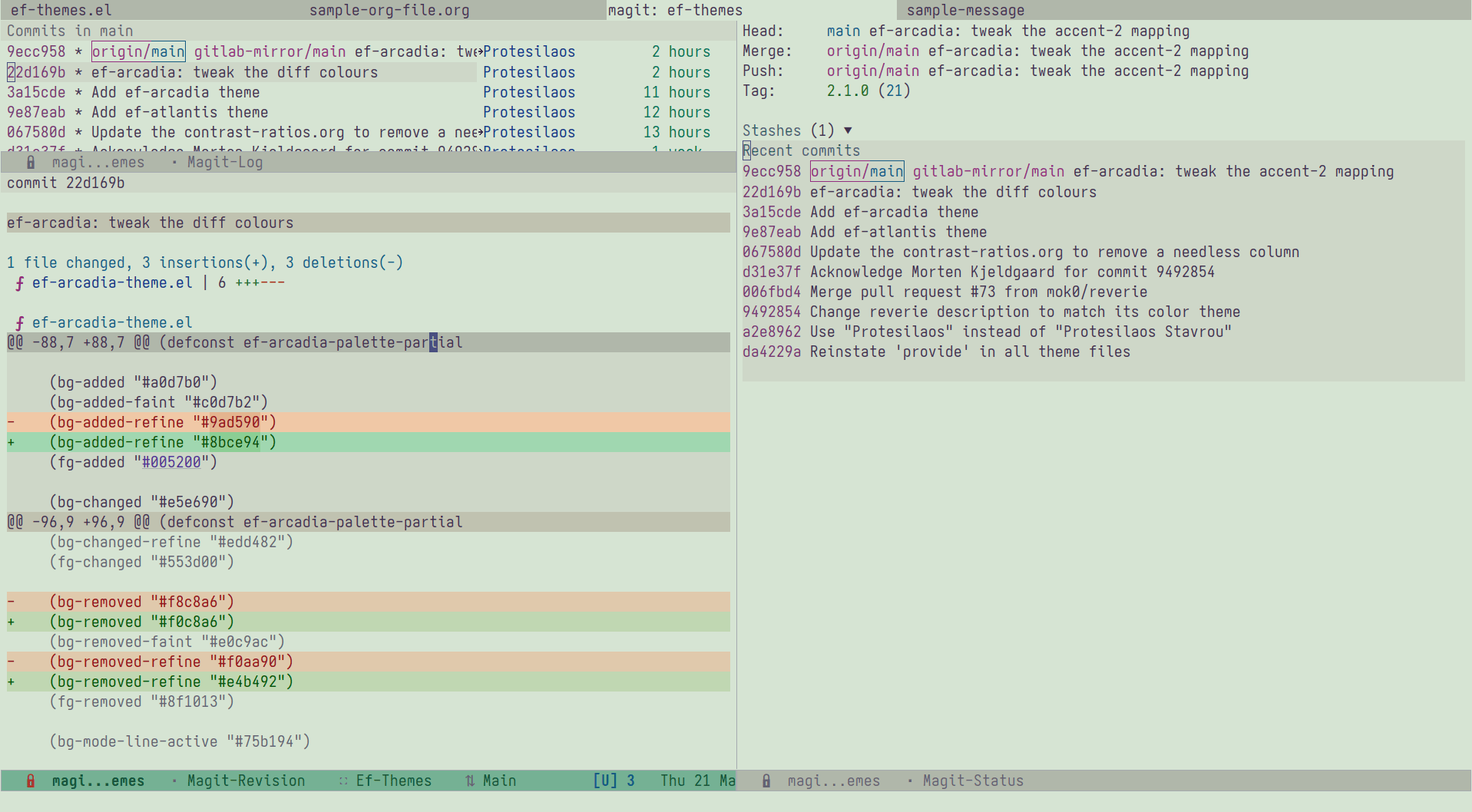

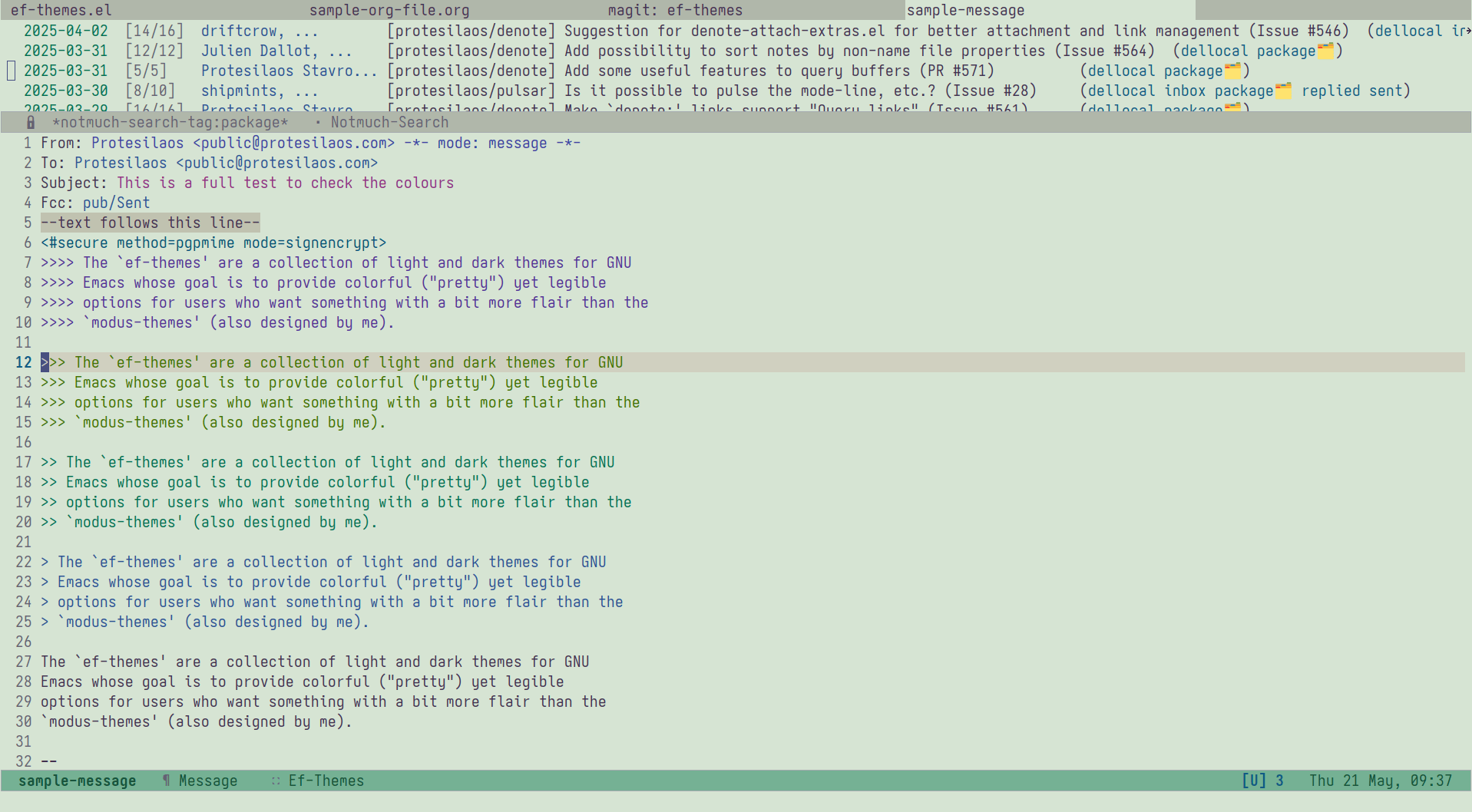

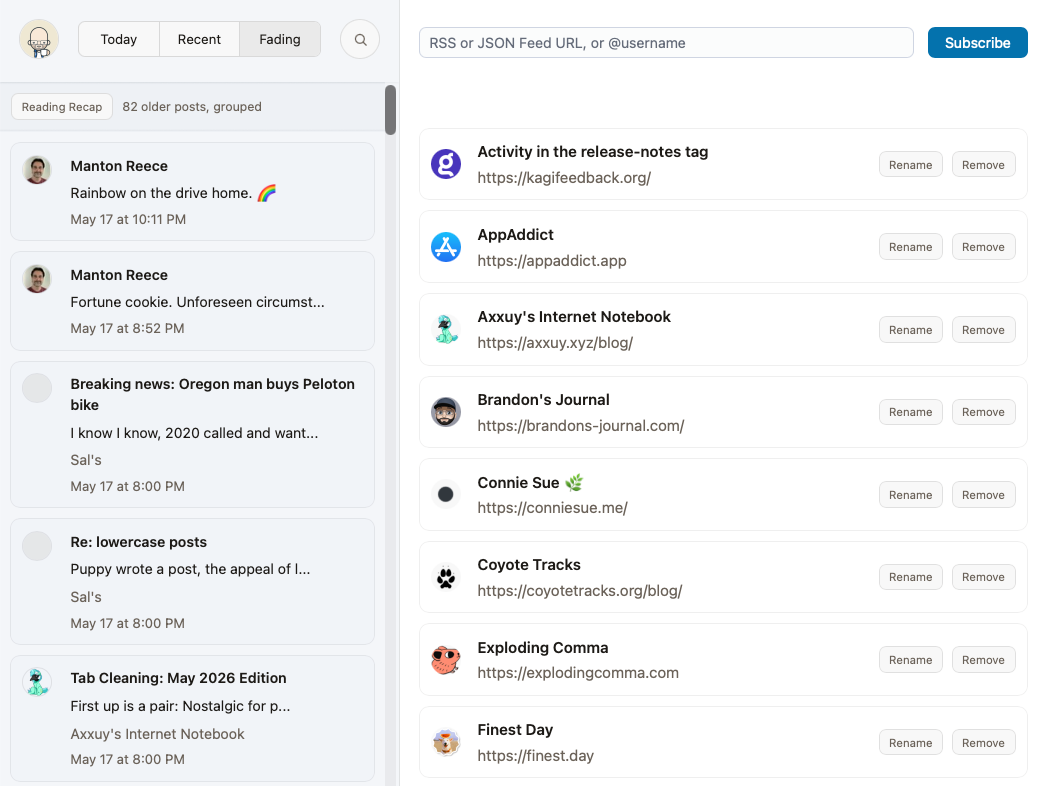

My emacs-solo config used to hard-code a single relative path under user-emacs-directory for all these cache files. It worked, but it was inflexible. A user opened an issue asking whether the cache location could be changed without forking the config, since they wanted those files somewhere else entirely. So I redesigned it around a defcustom root and an alist of relative paths, switchable through M-x customize. The version below is the same idea with the emacs-solo names dropped.

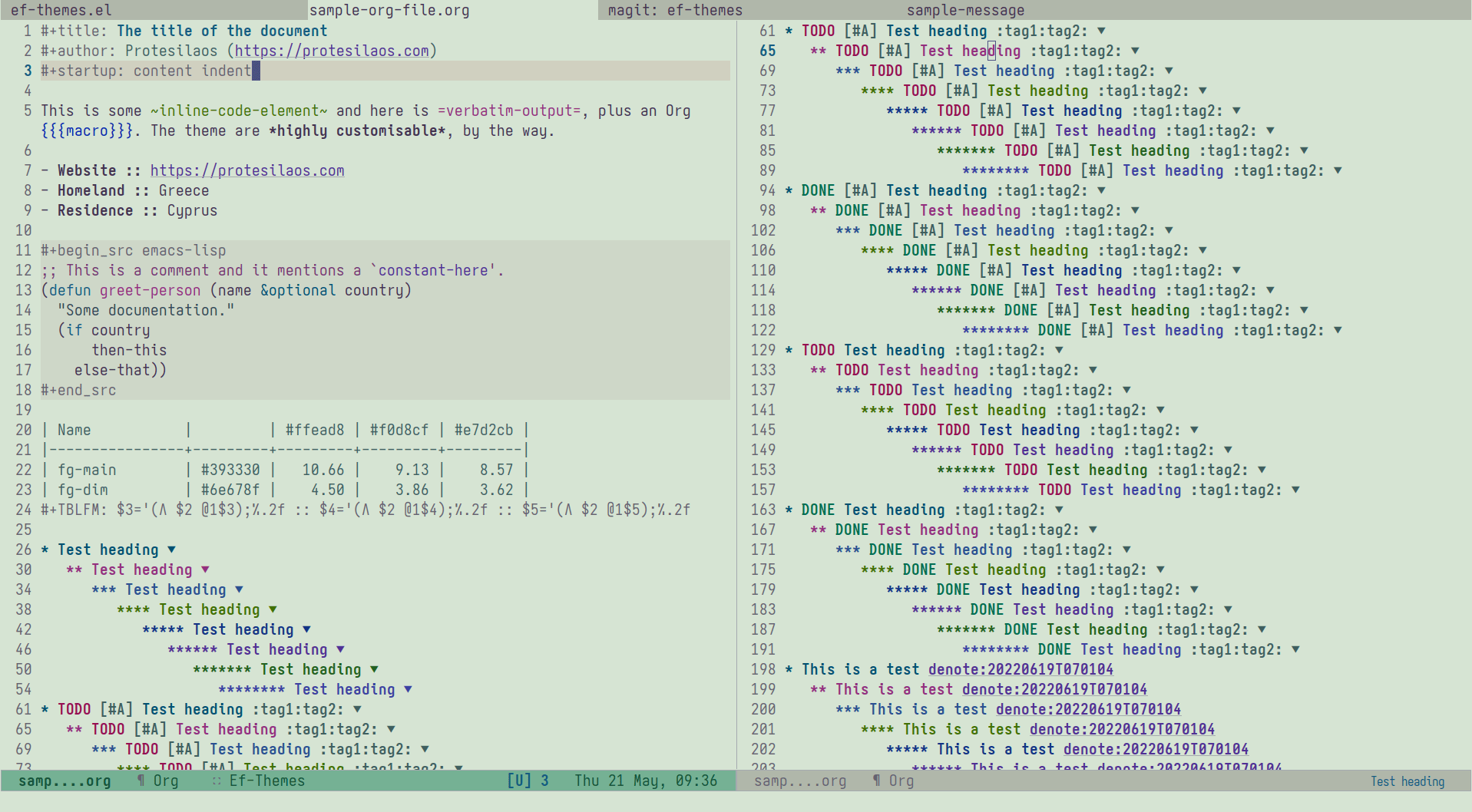

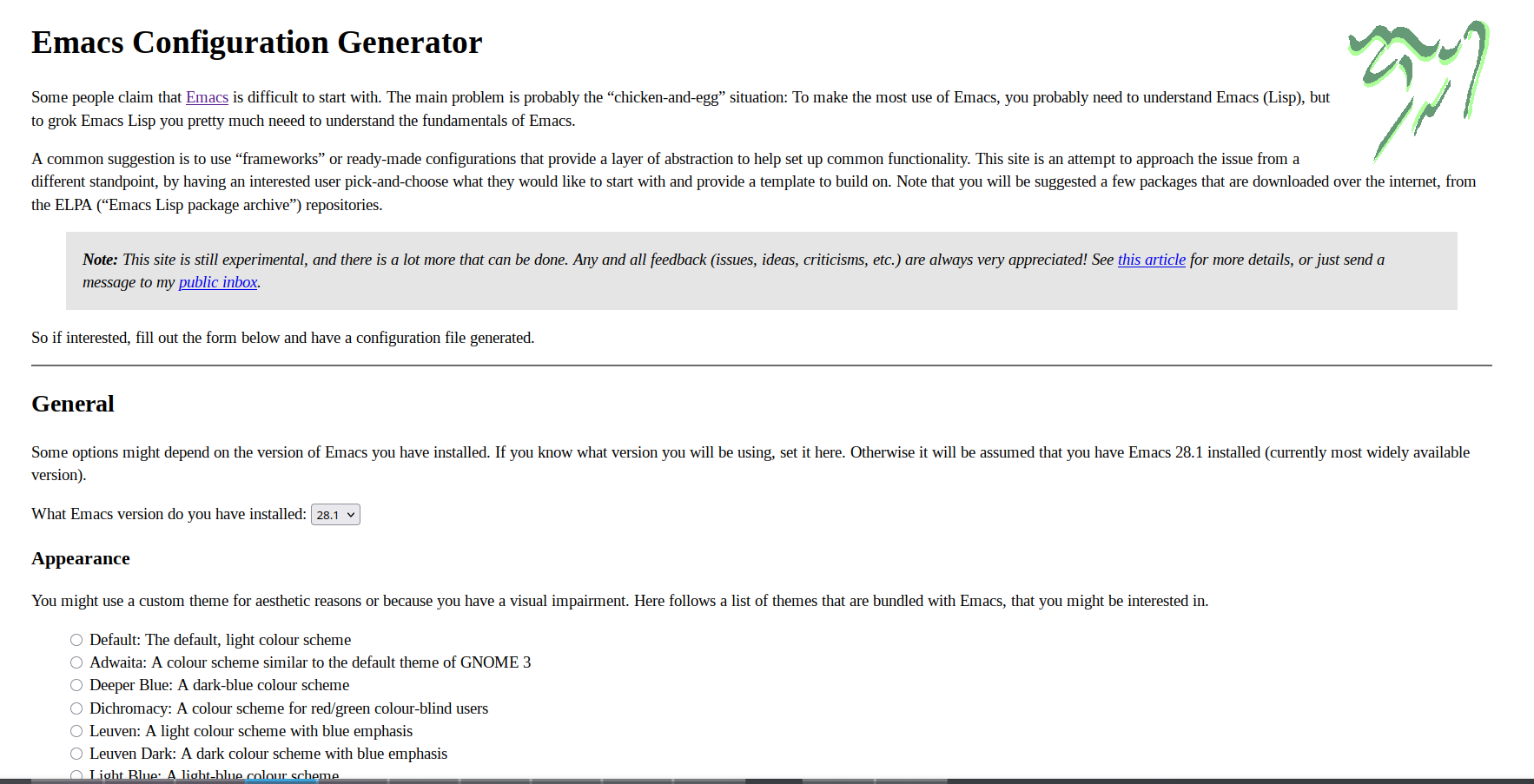

Our goal

We need three pieces:

→ cache directory: holds every piece of mutable state Emacs writes during a session.

→ alist map: lists every variable that needs to be pointed at that directory.

→ helpers: one resolves a key to an absolute path, and another pre-creates every directory so we never get "no such file or directory" warnings at startup.

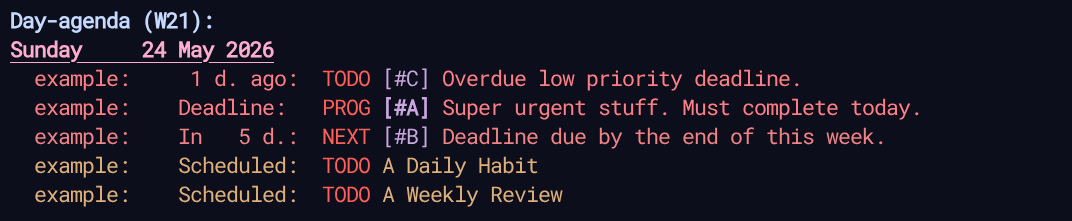

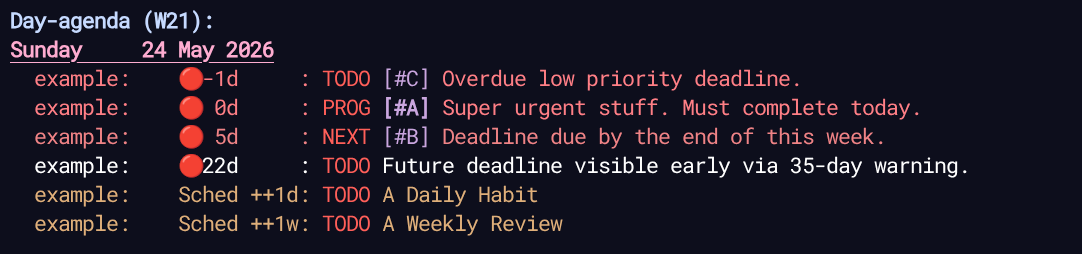

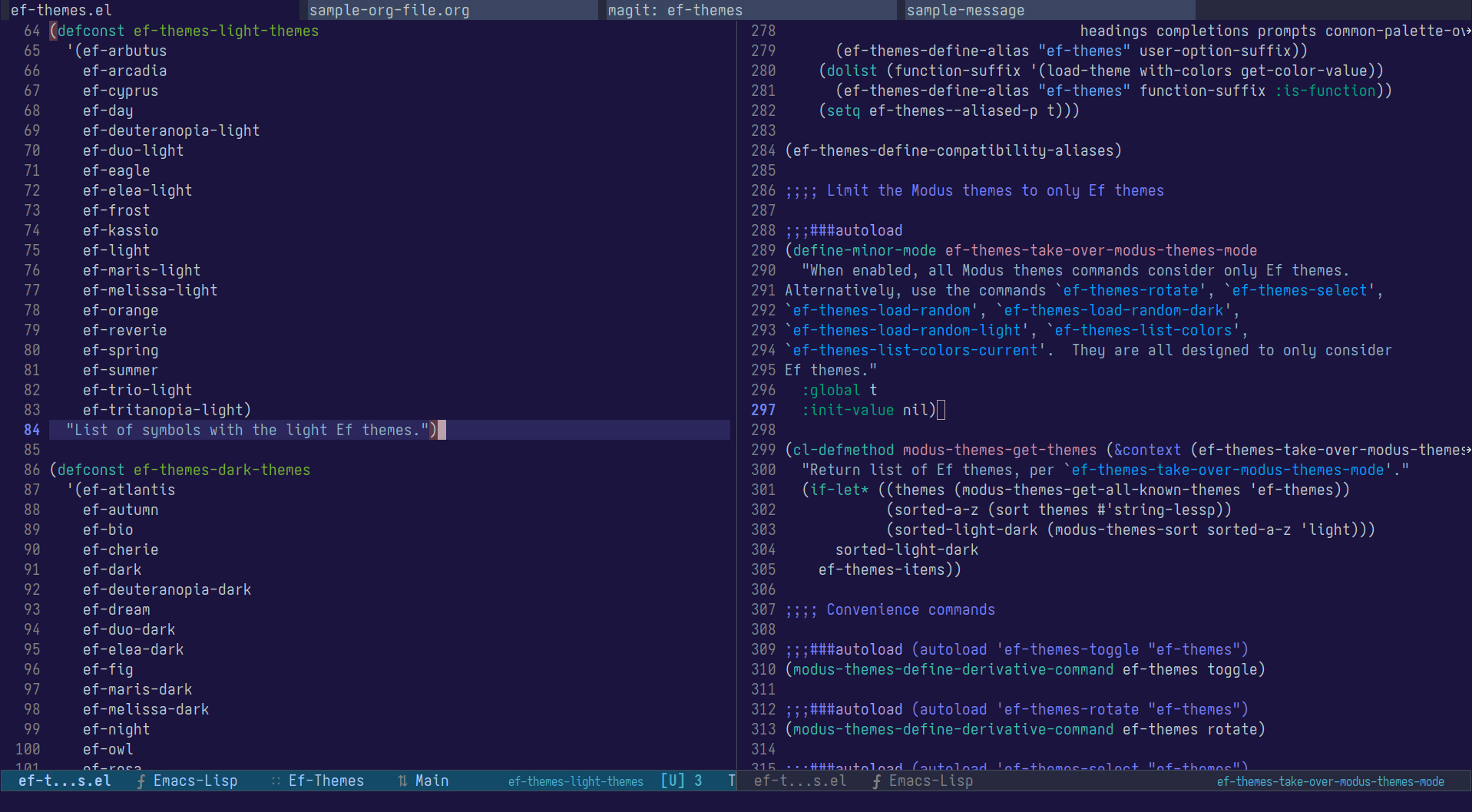

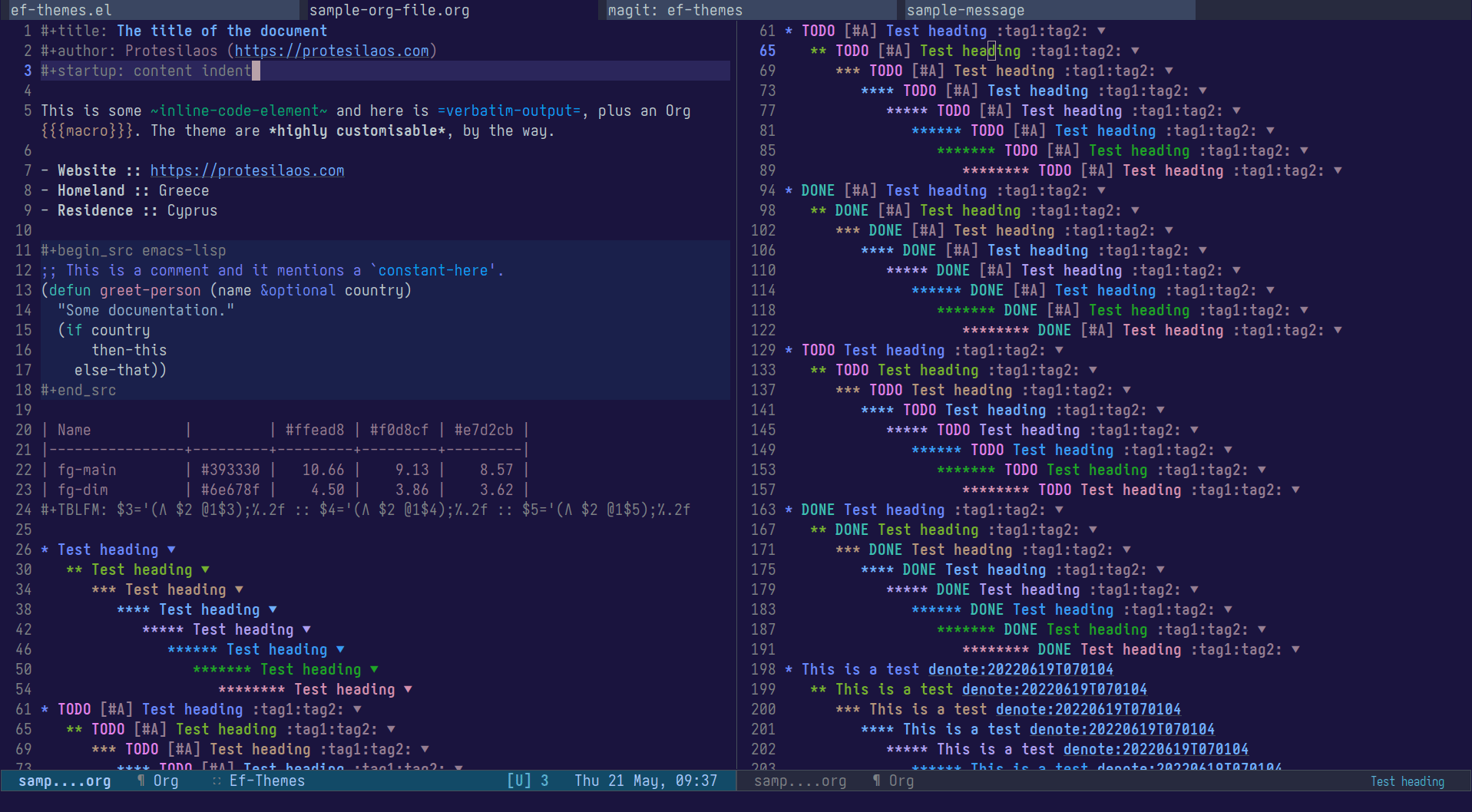

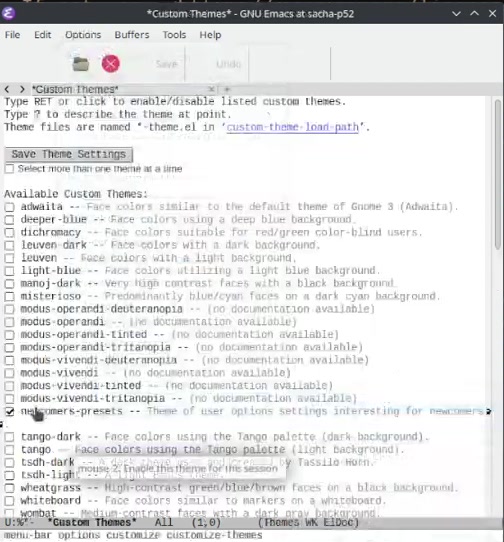

In practice, a .emacs.d directory ends up looking like this:

Our switchable base directory

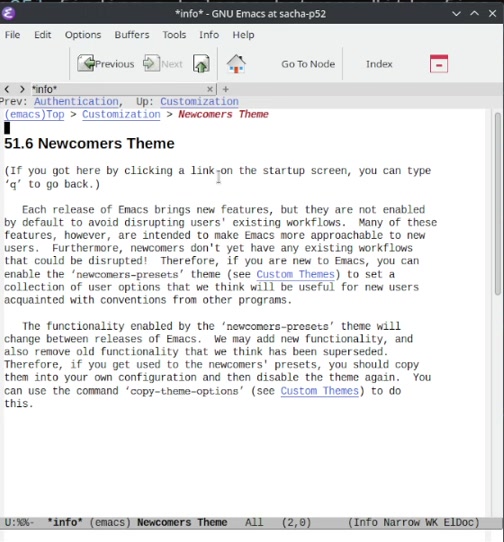

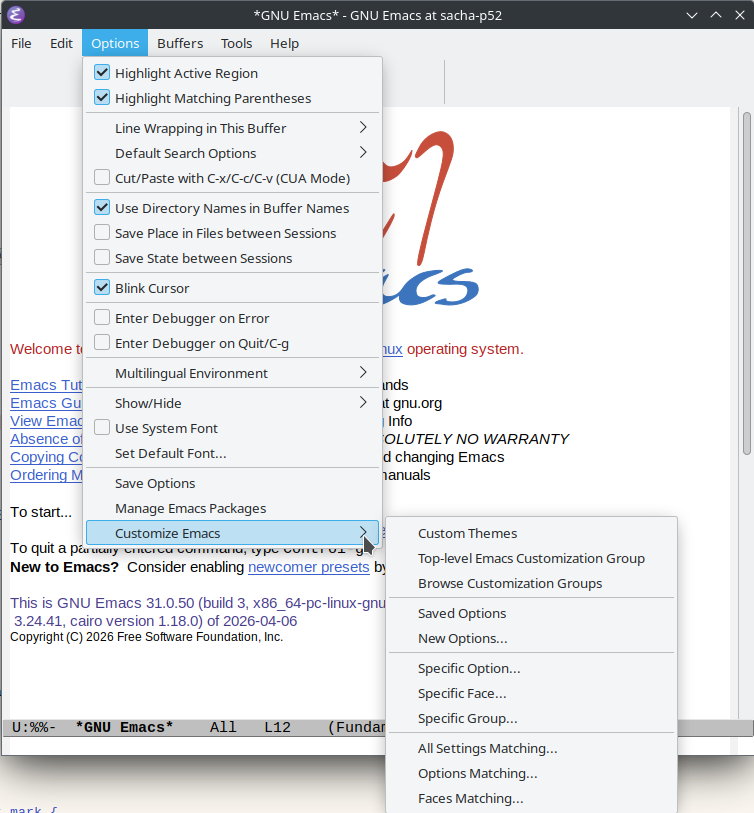

Start with a defcustom for the root. Using defcustom (instead of defvar) lets you change the value through M-x customize without editing init files, and the choice persists in custom.el:
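A minimal sketch of such a defcustom, assuming the option is named my/cache-directory (the name used with M-x customize-variable further down) and a hypothetical my/cache customization group. It offers the three choices described in the next paragraph:

```elisp
;; Hypothetical group so the option shows up in M-x customize-group.
(defgroup my/cache nil
  "Centralized locations for files Emacs writes at runtime."
  :group 'convenience)

(defcustom my/cache-directory
  (expand-file-name "cache/" user-emacs-directory)
  "Root directory for the mutable state Emacs writes during a session.
Entries in `my/cache-paths' are resolved relative to this directory."
  ;; :type is evaluated, so a backquote can splice in the computed default.
  :type `(choice
          (const :tag "Persistent, inside the config"
                 ,(expand-file-name "cache/" user-emacs-directory))
          (const :tag "Ephemeral, in /tmp" "/tmp/emacs-cache/")
          (directory :tag "Custom directory"))
  :group 'my/cache)
```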

The default puts everything under ~/.emacs.d/cache/, which keeps the rest of ~/.emacs.d/ clean and lets you back up or delete the whole cache as one directory. The /tmp/ preset is for sessions that should leave no trace. The directory choice lets you point at any custom path.

I use user-emacs-directory instead of a literal ~/.emacs.d/ because Emacs 29 added the --init-directory flag, which lets you launch Emacs against any config directory. I use it constantly to try other people's configs side by side with my own without touching ~/.emacs.d/. Anchoring on user-emacs-directory means the cache follows whichever config Emacs was started with, instead of all the alternate configs writing into the default ~/.emacs.d/ and stepping on each other.

In my early-init.el I already load custom-file, but I reload it here too. Customize writes its changes to custom.el immediately, so if you change the cache directory mid-session through M-x customize and then restart, you want the new value to apply before the rest of init.el runs and calls my/cache--path:
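Assuming custom-file was already set in early-init.el, the reload can be as small as this sketch:

```elisp
;; Re-read custom.el so a value saved through M-x customize
;; (for example a new `my/cache-directory') is active before any
;; later form calls `my/cache--path'.
(when custom-file
  (load custom-file 'noerror 'nomessage))
```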

The when guard is there because in a brand-new config custom-file is nil, and (load nil 'noerror 'nomessage) signals (wrong-type-argument stringp nil) and aborts the rest of init.el. The 'noerror flag suppresses file-error, not type errors.
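To see why the guard matters, this snippet (safe to evaluate in *scratch*) calls load with a nil file name and catches the error instead of letting it abort anything. load checks that its FILE argument is a string before NOERROR comes into play:

```elisp
;; NOERROR only covers a missing file; a nil FILE fails the type check.
(condition-case err
    (load nil 'noerror 'nomessage)
  (wrong-type-argument
   (message "load signalled: %S" err)))
;; Echo area: load signalled: (wrong-type-argument stringp nil)
```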

This whole reload is a bit of a hack. Loading custom-file twice during startup just to get a defcustom value hot before the next form is not what Emacs intends. It only matters the first time you switch presets. Without it, you would wonder why your new /tmp/emacs-cache/ is empty while your old ~/.emacs.d/cache/ keeps receiving writes.

Our single alist as the source of truth

The next piece is the mapping from keys to relative paths.

How you organize this alist is your call. The list below is what I wire through my/cache--path in my own config, but plenty of users will split things differently. I deliberately keep tree-sitter/, eln-cache/, and eshell/ outside this alist. tree-sitter and eln-cache are populated by long-running, expensive processes (grammar compilation, native compilation) that I want to survive when I reset my cache, so they live next to the config, directly under user-emacs-directory. eshell carries my command history and aliases, which I treat more like dotfiles than throwaway state. Your workflow may sort these differently, and that is fine.

The convention I follow: keys are usually the names of the Emacs variables they will fill. That keeps grep useful for both the definition and the usage.

A trailing slash on the value marks a directory (this matches directory-name-p in Emacs). No trailing slash means a file. The next helper uses that distinction.
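A sketch of the alist following both conventions. The entries are illustrative, picked from the files listed at the top of this post, and the variable name my/cache-paths matches the one used later on; defvar is enough here because only the root needs to be customizable:

```elisp
(defvar my/cache-paths
  '(;; Files: no trailing slash.
    (bookmark-default-file          . "bookmarks")
    (recentf-save-file              . "recentf")
    (savehist-file                  . "history")
    (save-place-file                . "saveplace")
    (project-list-file              . "projects")
    (transient-history-file         . "transient/history.el")
    (tramp-persistency-file-name    . "tramp")
    (nsm-settings-file              . "network-security.data")
    ;; Directories: trailing slash.
    (multisession-directory         . "multisession/")
    (url-configuration-directory    . "url/")
    (image-dired-dir                . "image-dired/")
    (auto-save-file-name-transforms . "auto-saves/")
    (auto-save-list-file-prefix     . "auto-saves/sessions/"))
  "Alist of (KEY . RELATIVE-PATH) resolved against `my/cache-directory'.
A trailing slash marks a directory; anything else is a file.")
```

Keying the auto-save entries by variable name keeps them greppable like the others, even though auto-save-file-name-transforms does not take the path directly.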

Our helpers

The first helper turns a key into an absolute path:
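A sketch that matches the description below (an assq lookup plus an unless rel guard), assuming the root lives in my/cache-directory and the alist in my/cache-paths:

```elisp
(defun my/cache--path (key)
  "Return the absolute path registered under KEY in `my/cache-paths'.
Signal an error for unknown keys so typos fail at config load."
  (let ((rel (cdr (assq key my/cache-paths))))   ; eq lookup on symbols
    (unless rel
      (error "my/cache--path: no entry for %S in my/cache-paths" key))
    ;; expand-file-name keeps a trailing slash, so directories stay
    ;; recognizable as directories.
    (expand-file-name rel my/cache-directory)))
```

With the default root, (my/cache--path 'url-configuration-directory) should return an absolute path ending in cache/url/, trailing slash included.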

assq does an eq lookup on symbol keys. The unless rel branch is there because typos in :custom blocks are silent otherwise: you would get nil and Emacs would happily write to nil, which fails in confusing ways. Better to error at config load.

The second helper pre-creates every directory the alist mentions, so we never get "directory does not exist" warnings from packages on first run:
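One way to write it; the name my/cache--ensure-directories is hypothetical, and the function assumes the same my/cache-paths and my/cache-directory as the first helper:

```elisp
(defun my/cache--ensure-directories ()
  "Create every directory referenced by `my/cache-paths'.
Directory entries are created as-is; for file entries only the
parent directory is created."
  (dolist (entry my/cache-paths)
    (let ((path (expand-file-name (cdr entry) my/cache-directory)))
      (make-directory (if (directory-name-p path)
                          path
                        (file-name-directory path))
                      t))))   ; t = create parents, like mkdir -p

;; Run once at startup, before any package tries to write.
(my/cache--ensure-directories)
```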

If the entry is a directory (directory-name-p returns t for values ending in /), it is created as-is. If the entry is a file, only the file's parent directory is created.

The t argument to make-directory is the "parents" flag, the equivalent of mkdir -p. So transient/history.el correctly creates <cache>/transient/ even though we never list it explicitly.
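The two cases map onto these built-ins; evaluating the forms in *scratch* should give the results in the comments. It also shows why resolving to an absolute path first matters: for a bare file name like "tramp", file-name-directory returns nil, which make-directory would reject.

```elisp
;; A value ending in "/" is a directory...
(directory-name-p "/tmp/emacs-cache/url/")                 ; => t
;; ...anything else is a file.
(directory-name-p "/tmp/emacs-cache/transient/history.el") ; => nil

;; For a file, only its parent directory is created.
(file-name-directory "/tmp/emacs-cache/transient/history.el")
;; => "/tmp/emacs-cache/transient/"

;; A relative name with no directory part has no parent to create.
(file-name-directory "tramp")                              ; => nil

;; PARENTS = t creates missing parents and does not complain if the
;; directory already exists (this form really creates it).
(make-directory "/tmp/emacs-cache/transient/" t)
```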

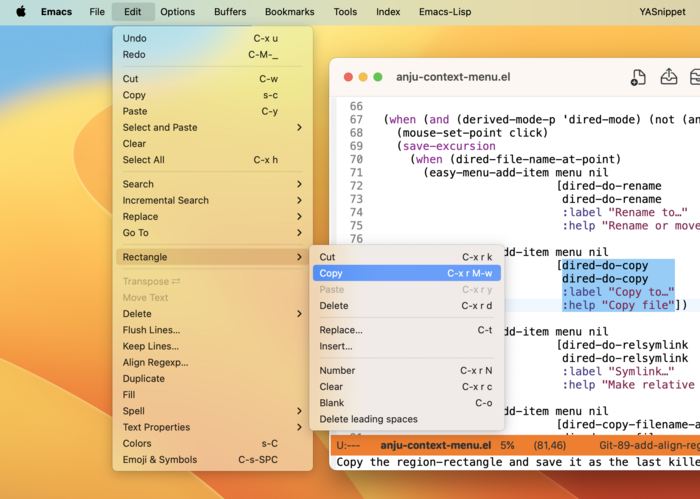

Wiring it into use-package :custom

Now every cache-bound variable points at our helper. Inside a use-package emacs block (or wherever you set built-ins):
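A sketch of such a block, assuming keys that follow the variable-name convention. The exact set of variables is illustrative; the three settings at the bottom are the ones discussed next:

```elisp
(use-package emacs
  :ensure nil                      ; built-in, nothing to install
  :custom
  ;; State files redirected into the cache directory.
  (bookmark-default-file (my/cache--path 'bookmark-default-file))
  (recentf-save-file (my/cache--path 'recentf-save-file))
  (savehist-file (my/cache--path 'savehist-file))
  (save-place-file (my/cache--path 'save-place-file))
  (project-list-file (my/cache--path 'project-list-file))
  (transient-history-file (my/cache--path 'transient-history-file))
  (nsm-settings-file (my/cache--path 'nsm-settings-file))
  (multisession-directory (my/cache--path 'multisession-directory))
  (url-configuration-directory (my/cache--path 'url-configuration-directory))
  (image-dired-dir (my/cache--path 'image-dired-dir))
  ;; File-safety settings.
  (create-lockfiles nil)
  (make-backup-files nil)
  (auto-save-default t))
```

The :custom values are evaluated, so each my/cache--path call resolves against whatever my/cache-directory holds at that moment.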

On the three settings at the bottom:

→ create-lockfiles nil and make-backup-files nil are personal taste. Lockfiles warn another Emacs not to clobber your buffer, but they break frontend tooling that watches directories for changes (TypeScript, Vite, esbuild). As for the tilde backups, I find them redundant with modern VCS and auto-save.

→ auto-save-default t stays on because auto-save is what rescues you when Emacs crashes. We just want it somewhere else.

Redirecting auto-saves

Auto-save needs two extra settings because Emacs uses path transforms for it instead of single-value variables:
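As a sketch, both settings fit in the same kind of :custom block, assuming alist entries keyed auto-save-list-file-prefix (auto-saves/sessions/) and auto-save-file-name-transforms (auto-saves/); those key names are assumptions:

```elisp
(use-package emacs
  :ensure nil
  :custom
  ;; Where M-x recover-session looks for the per-session lists.
  (auto-save-list-file-prefix (my/cache--path 'auto-save-list-file-prefix))
  ;; (REGEXP REPLACEMENT UNIQUIFY): send every auto-save file into
  ;; auto-saves/, encoding the original path into the file name.
  (auto-save-file-name-transforms
   `((".*" ,(my/cache--path 'auto-save-file-name-transforms) t))))
```

With this in place, an auto-save for /home/me/notes/todo.org should land at roughly <cache>/auto-saves/#!home!me!notes!todo.org#, with the slashes of the original path turned into !.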

auto-save-list-file-prefix controls where the "list of files with pending auto-saves" lives. This is what M-x recover-session reads. Pointing it at our auto-saves/sessions/ directory means you can still recover after a crash, without ~/.emacs.d/auto-save-list/ cluttering your config.

auto-save-file-name-transforms is a list of (REGEX REPLACEMENT UNIQUIFY) triples. The t at the end is the uniquify flag, which encodes the original path into the file name so two files with the same name in different directories do not collide in the auto-save folder.

Both end up in <cache>/auto-saves/.

TRAMP, viper, and other late bindings

Some variables are not safely set in :custom, because they are defined inside packages that load later. For those, use setopt (which respects defcustom setters) inside the :config block:
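For TRAMP that could look like the following sketch, which assumes an alist entry keyed tramp-persistency-file-name; setopt requires Emacs 29 or later:

```elisp
(use-package tramp
  :ensure nil
  :defer t                         ; :config runs once TRAMP is loaded
  :config
  ;; setopt goes through the variable's custom setter, unlike setq.
  (setopt tramp-persistency-file-name
          (my/cache--path 'tramp-persistency-file-name)))
```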

setopt is the modern equivalent of setq for customizable variables. It runs any :set function the variable defines, which tramp-persistency-file-name does (it triggers a reload). Plain setq would skip that and leave TRAMP confused.

What you get

→ ~/.emacs.d/cache/ (or wherever you point it) contains everything. du -sh tells you how much state Emacs is hoarding. rm -rf resets it all without touching your config.

→ M-x customize-variable RET my/cache-directory lets you flip between persistent and ephemeral modes without editing init files. Useful for screencasts where you want zero history showing, or for testing whether a problem is config or state.

→ New packages are a two-line change. When you adopt, say, newsticker, you add one entry to my/cache-paths and one (my/cache--path 'newsticker-dir) line in the package's :custom. The directory is auto-created on next restart.

→ You can grep for it. grep my/cache--path init.el lists every place Emacs writes state, and the alist tells you what kind.
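Taking the newsticker case from the list above, the two-line change might look like this sketch, assuming newsticker-dir is the variable you want to move and the key follows the variable-name convention:

```elisp
;; 1. Add one entry to the `my/cache-paths' alist literal:
;;      (newsticker-dir . "newsticker/")
;; 2. Point the package's variable at it:
(use-package newsticker
  :ensure nil
  :defer t
  :custom
  (newsticker-dir (my/cache--path 'newsticker-dir)))
```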

The complete code

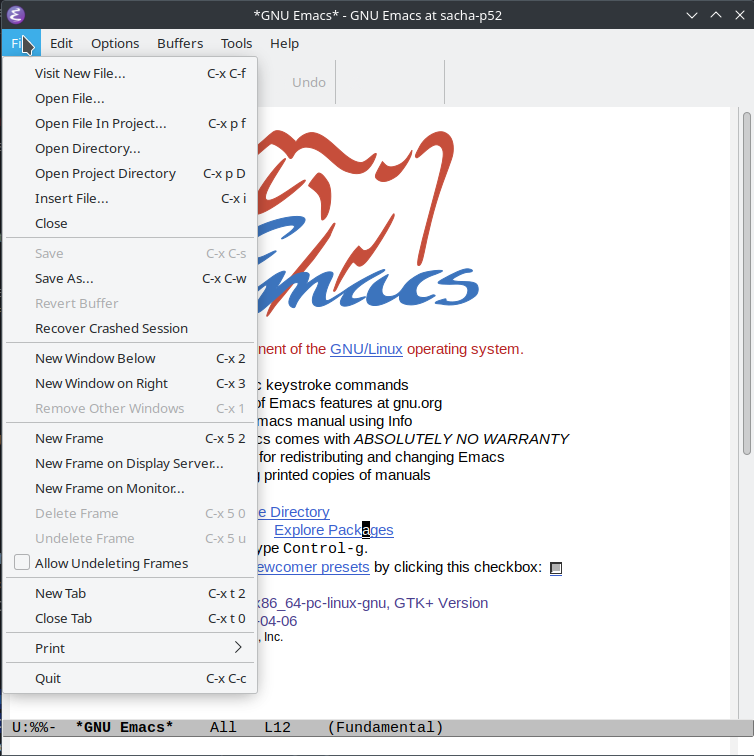

Here is everything in one block. Copy it into an init.el file inside a temporary folder, cd into that folder, and run emacs --init-directory=./. You can then navigate files, use Emacs features, and check where the created files end up.

Adapt the alist to the packages you actually use.
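A sketch of such an init.el, assembled from the pieces described above and assuming Emacs 29 or later (built-in use-package and setopt). The alist entries, the my/cache group, the helper name my/cache--ensure-directories, and the custom-file line are illustrative assumptions, not a copy of the emacs-solo code:

```elisp
;;; init.el --- centralized cache paths (sketch)  -*- lexical-binding: t; -*-

;; Assumption: normally set (and loaded) in early-init.el.
(setq custom-file (expand-file-name "custom.el" user-emacs-directory))

(defgroup my/cache nil
  "Centralized locations for files Emacs writes at runtime."
  :group 'convenience)

(defcustom my/cache-directory
  (expand-file-name "cache/" user-emacs-directory)
  "Root directory for the mutable state Emacs writes during a session."
  :type `(choice
          (const :tag "Persistent, inside the config"
                 ,(expand-file-name "cache/" user-emacs-directory))
          (const :tag "Ephemeral, in /tmp" "/tmp/emacs-cache/")
          (directory :tag "Custom directory"))
  :group 'my/cache)

;; Make a value saved through M-x customize active before it is used.
(when custom-file
  (load custom-file 'noerror 'nomessage))

;; Keys are variable names; a trailing slash marks a directory.
(defvar my/cache-paths
  '((bookmark-default-file          . "bookmarks")
    (recentf-save-file              . "recentf")
    (savehist-file                  . "history")
    (save-place-file                . "saveplace")
    (project-list-file              . "projects")
    (transient-history-file         . "transient/history.el")
    (tramp-persistency-file-name    . "tramp")
    (nsm-settings-file              . "network-security.data")
    (multisession-directory         . "multisession/")
    (url-configuration-directory    . "url/")
    (image-dired-dir                . "image-dired/")
    (auto-save-file-name-transforms . "auto-saves/")
    (auto-save-list-file-prefix     . "auto-saves/sessions/"))
  "Alist of (KEY . RELATIVE-PATH) resolved against `my/cache-directory'.")

(defun my/cache--path (key)
  "Return the absolute path registered under KEY in `my/cache-paths'."
  (let ((rel (cdr (assq key my/cache-paths))))
    (unless rel
      (error "my/cache--path: no entry for %S in my/cache-paths" key))
    (expand-file-name rel my/cache-directory)))

(defun my/cache--ensure-directories ()
  "Create every directory referenced by `my/cache-paths'."
  (dolist (entry my/cache-paths)
    (let ((path (expand-file-name (cdr entry) my/cache-directory)))
      (make-directory (if (directory-name-p path)
                          path
                        (file-name-directory path))
                      t))))

(my/cache--ensure-directories)

(use-package emacs
  :ensure nil
  :custom
  (bookmark-default-file (my/cache--path 'bookmark-default-file))
  (recentf-save-file (my/cache--path 'recentf-save-file))
  (savehist-file (my/cache--path 'savehist-file))
  (save-place-file (my/cache--path 'save-place-file))
  (project-list-file (my/cache--path 'project-list-file))
  (transient-history-file (my/cache--path 'transient-history-file))
  (nsm-settings-file (my/cache--path 'nsm-settings-file))
  (multisession-directory (my/cache--path 'multisession-directory))
  (url-configuration-directory (my/cache--path 'url-configuration-directory))
  (image-dired-dir (my/cache--path 'image-dired-dir))
  (auto-save-list-file-prefix (my/cache--path 'auto-save-list-file-prefix))
  (auto-save-file-name-transforms
   `((".*" ,(my/cache--path 'auto-save-file-name-transforms) t)))
  (create-lockfiles nil)
  (make-backup-files nil)
  (auto-save-default t))

(use-package tramp
  :ensure nil
  :defer t
  :config
  (setopt tramp-persistency-file-name
          (my/cache--path 'tramp-persistency-file-name)))
```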

Other Resources

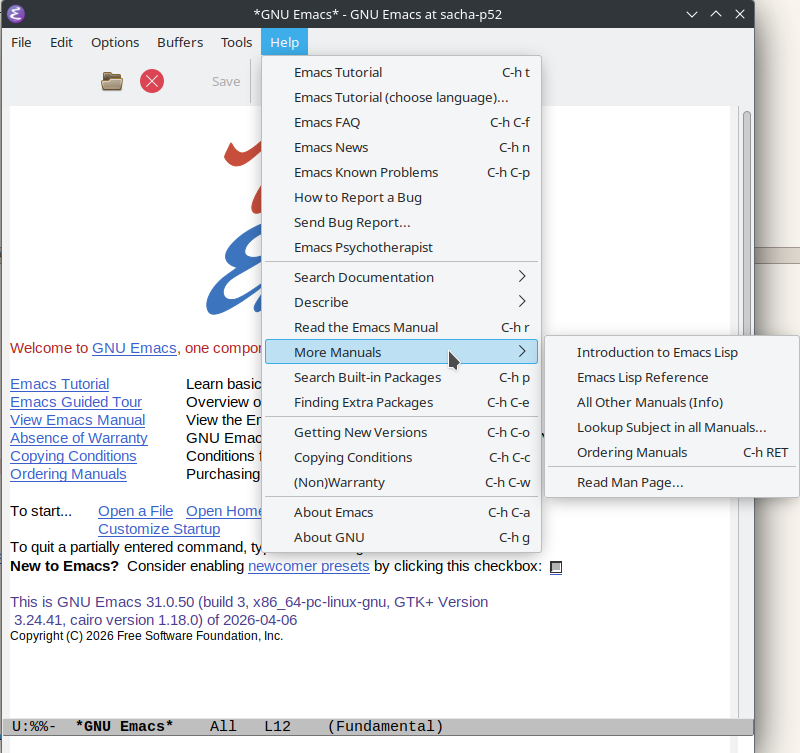

If you want to read more on the topic:

→ https://www.gnu.org/software/emacs/manual/html_node/emacs/Auto-Save-Files.html

→ https://www.gnu.org/software/emacs/manual/html_node/elisp/Variable-Definitions.html#index-defcustom

If you would rather install a package than maintain your own alist, the no-littering package is a popular ready-made alternative that covers a wide set of variables out of the box.

The version of this code I actually run, alist entries and all, lives in my emacs-solo config under the CACHE PATHS heading of init.el. If you end up adapting this for your own setup, I would love to hear which variables you added that I forgot.